Your agents are failing. Your traces won't tell you why.

Moyai connects to Langfuse or LangSmith in minutes, finds behavioral anomalies in your traces, and tells you which ones are actually broken. No new SDK. No rules to define. No manual log review.

Connects to your existing trace stack

No new SDK. Moyai reads the traces you already generate.

The problem

You have observability. You don't have reliability.

Your traces show what your agent did. They don't tell you when it did something wrong. That gap is where failures compound, silently, across every similar request.

Your traces show what happened. Not what's broken.

Langfuse shows you 10,000 traces. Which ones have problems? Your team stares at logs for hours hoping patterns emerge.

Real failures hide in the volume because observability tools show everything — not what matters.

Your evals pass. Your agent still fails.

You wrote evals for the failures you anticipated. The ones you didn't anticipate ship to production.

A wrong tool call, a subtle context loss, a confident wrong answer — these don't trigger alerts because nobody wrote a rule for them.

Your customers are your monitoring system.

The gap between "something broke" and "you noticed" is measured in support tickets and lost trust.

By the time a user complains, the failure has been compounding across every similar request for days.

Ready to catch silent agent failures?

See what Moyai finds in your traces.

The solution

Find what's broken. Know why. Fix it first.

Moyai reads your existing agent logs, surfaces behavioral anomalies, judges whether they're real failures, and routes problems to your team — fully automatic, no rules to define.

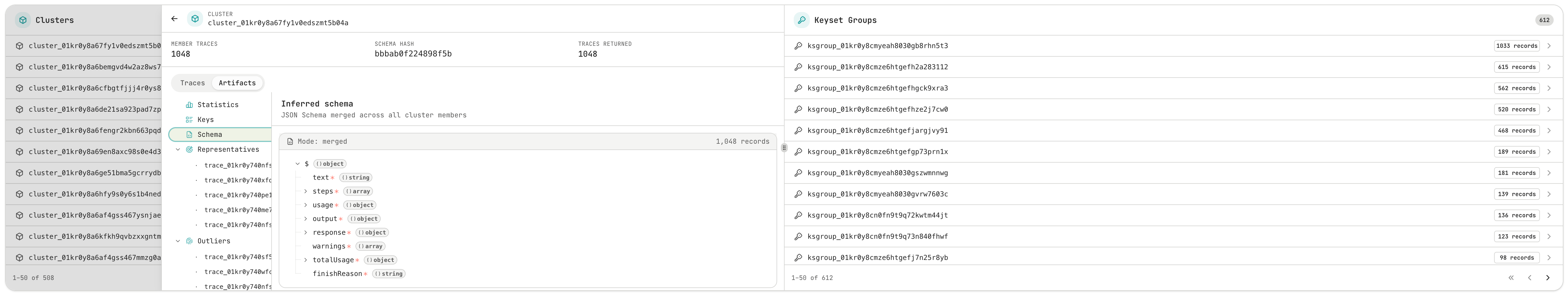

Cluster

Surface anomalies your evals will never catch.

Structural and semantic clustering groups your traces by behavior, then finds the outliers. No rules to write. No failure definitions upfront. Moyai surfaces the traces that actually deviate from your agent's normal behavior.

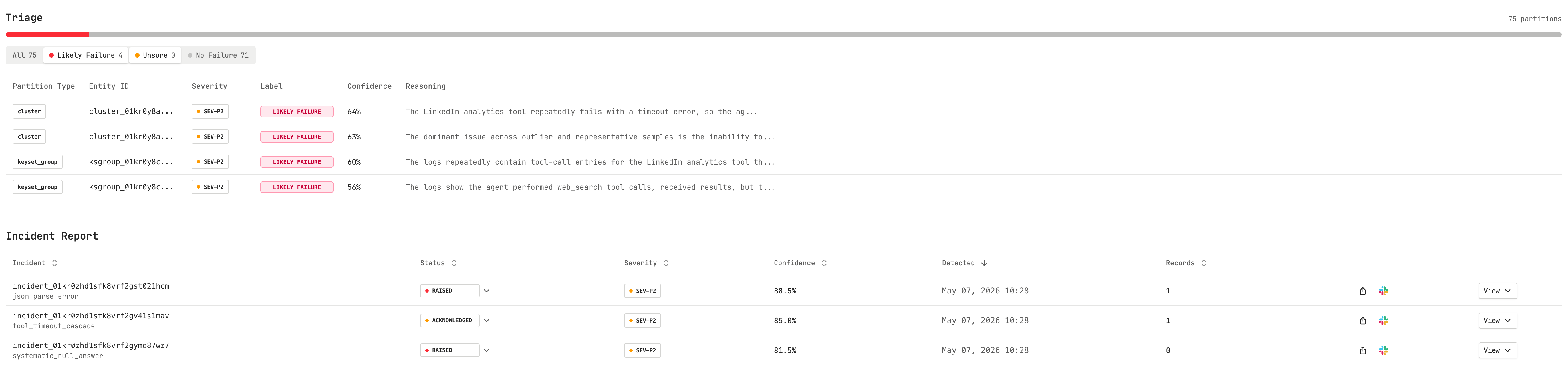
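To make the clustering idea concrete, here is a minimal sketch of the simplest structural variant, assuming each trace can be reduced to its ordered list of tool calls. The `Trace` shape, `find_structural_outliers`, and the `min_share` threshold are hypothetical illustrations, not Moyai's implementation or API. Real structural and semantic clustering would use a fuzzier notion of similarity than exact sequence matching.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Trace:
    # Hypothetical minimal trace shape: an id plus the ordered tool calls the agent made.
    trace_id: str
    tool_calls: list[str]


def structural_signature(trace: Trace) -> tuple[str, ...]:
    # Group traces by the exact sequence of tool calls (a crude stand-in for structural clustering).
    return tuple(trace.tool_calls)


def find_structural_outliers(traces: list[Trace], min_share: float = 0.05) -> list[str]:
    """Return ids of traces whose signature covers less than `min_share` of all traces."""
    if not traces:
        return []
    # Count how many traces fall into each structural group.
    counts = Counter(structural_signature(t) for t in traces)
    total = len(traces)
    # A trace is an outlier if its group is rare relative to the whole population.
    return [
        t.trace_id
        for t in traces
        if counts[structural_signature(t)] / total < min_share
    ]


if __name__ == "__main__":
    traces = [Trace(f"ok-{i}", ["search", "summarize"]) for i in range(30)]
    traces.append(Trace("odd-1", ["search", "search", "search", "delete_record"]))
    print(find_structural_outliers(traces))  # ['odd-1']
```

Exact-signature grouping is deliberately crude: it treats any reordering of tool calls as a new behavior. It does show the core idea, though. Outliers are defined relative to what the agent usually does, not against a rule written in advance.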

Evaluate

Not every anomaly is a failure. We figure out which ones are.

Agentic judges, not a single LLM-as-judge call, reason step by step about whether an outlier is a real problem or just a behavioral shift. It's fully automatic: Moyai evaluates whether the agent failed at any step while producing its result, with no configuration and no rules to define.

Alert

Know what broke, why it broke, and who should fix it.

Every alert comes with reasoning: not just "something changed" but what failed, which traces are affected, and the likely root cause. Each alert is routed to the right person in Slack. Your team gets activated, not just notified.

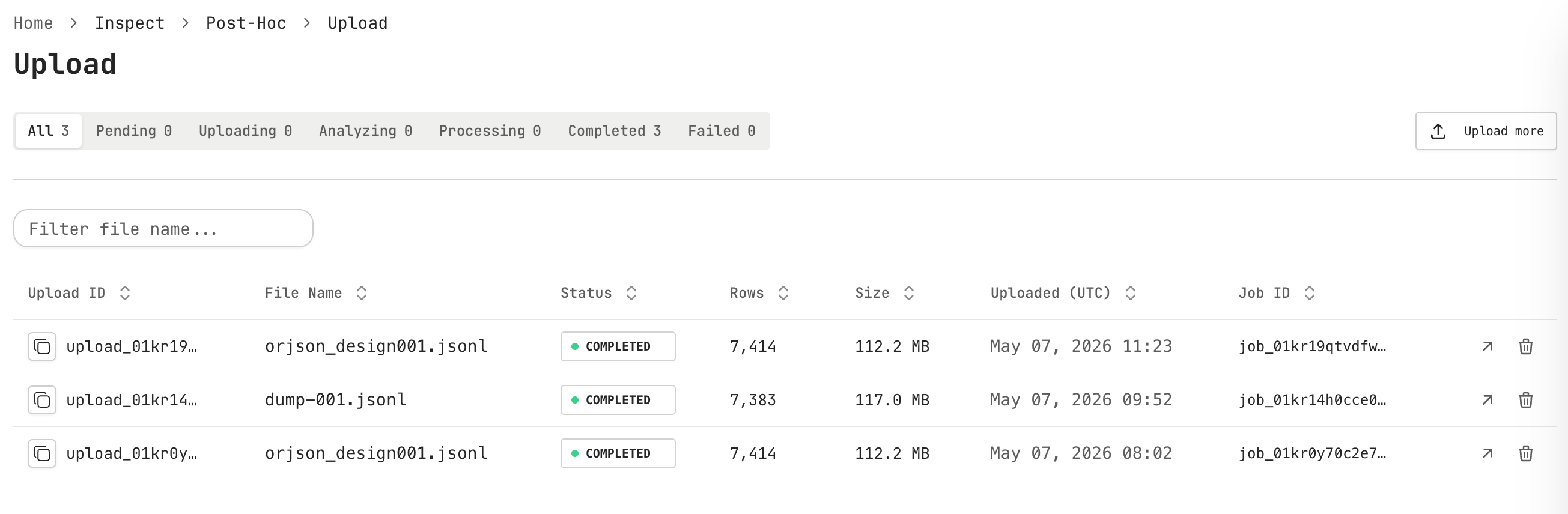

Integrate

Keep your stack. Add reliability.

Connect to Langfuse, LangSmith, or any OpenTelemetry-compatible tool. No new SDK. Minimal code changes. Moyai reads the logs you already generate — you get reliability in minutes, not weeks.

Compatibility

Connect your existing stack in under 5 minutes

No SDK to install. Moyai reads the traces you already generate.

FAQ

AI agent reliability monitoring, without replacing your stack.

What does Moyai monitor?

Moyai analyzes production AI-agent traces to find behavioral anomalies, judge which anomalies are real failures, and turn them into incidents your team can inspect and fix.

Does Moyai require a new SDK?

No. Moyai is designed to read traces you already generate from tools like Langfuse, LangSmith, or OpenTelemetry-compatible pipelines.

How is Moyai different from tracing or observability tools?

Tracing tools show what happened. Moyai focuses on reliability: it clusters similar behavior, highlights outliers, evaluates whether they are customer-visible failures, and explains what likely broke.

Does Moyai work with Langfuse and LangSmith?

Yes. Moyai can connect to Langfuse and LangSmith traces so teams can add failure monitoring without replacing their existing observability workflow.

Observability tells you what happened. Reliability tells you what's broken.